AI Test Automation: Accelerating DevOps Velocity

Jonestown, United States – February 16, 2026 / Test Quality /

Smart Testing Strategies Help Engineering Teams Ship Faster Without Breaking Things

AI test automation is redefining how engineering teams deliver software at scale.

-

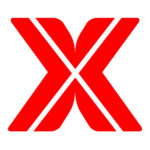

Organizations integrating Gen AI into quality engineering report 72% faster automation processes.

-

Over 68% of organizations are actively using or developing roadmaps for AI in testing workflows.

-

Intelligent test selection and self-healing capabilities eliminate the bottlenecks that traditionally slow release cycles.

Organizations that integrate AI into their QA pipelines position themselves to compete in markets where deployment frequency separates winners from laggards.

Software release velocity has become a competitive differentiator, and traditional testing approaches can’t keep up. According to the Capgemini World Quality Report 2024-25, 72% of organizations report faster automation processes after integrating Gen AI into their quality engineering workflows, while 68% are actively using or planning Gen AI implementations.

The pressure to ship faster isn’t letting up. High-performing DevOps teams deploy code hundreds of times more frequently than their peers, and that gap keeps widening. For QA professionals and developers alike, the question is how quickly you can integrate AI test automation before your release cycles become a liability.

What Makes AI Test Automation Different from Traditional Approaches?

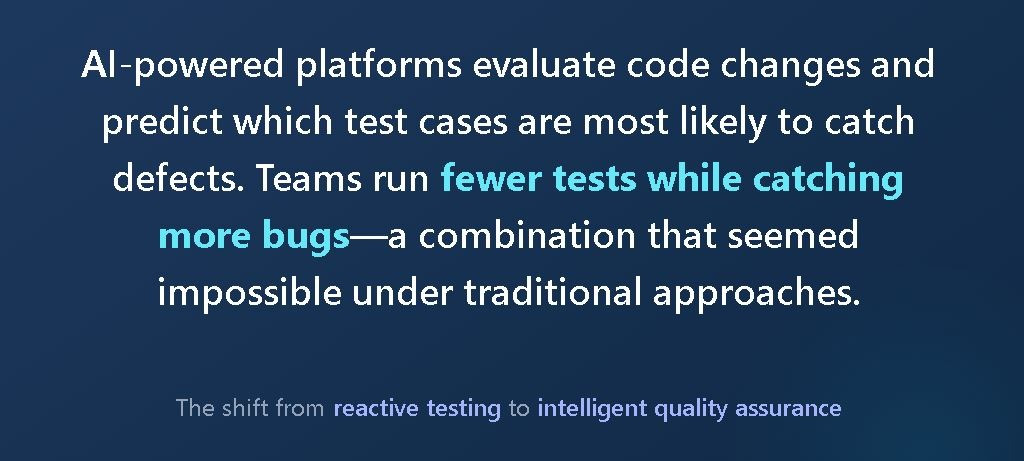

Traditional test automation operates on a straightforward premise: write scripts, execute them, and fix whatever breaks. The problem is that modern applications change constantly, and those brittle scripts break just as frequently. Teams spend more time maintaining tests than actually testing, creating a frustrating cycle that undermines the entire purpose of automation.

AI test automation introduces intelligence where there was only rigidity. Machine learning algorithms analyze application behavior, identify patterns, and automatically adapt test scripts when UI elements shift or workflows change. This self-healing capability alone transforms testing economics. Instead of dedicating engineering hours to chasing flaky tests, teams focus on building features that matter.
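To make the self-healing idea concrete, here is a minimal sketch in Python. It assumes a simplified page model (the `Element` class and `find_with_healing` function are hypothetical names for illustration) and uses an ordered list of fallback locators. Commercial tools typically rank candidates with learned similarity models, so treat this as an illustration of the principle rather than any product's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Simplified stand-in for a UI element (hypothetical, not a real driver type)."""
    tag: str
    attrs: dict = field(default_factory=dict)
    text: str = ""


def find_with_healing(page, locators):
    """Try each (attribute, value) locator in order.

    Returns the first matching element plus the locator that worked,
    so the test script can be updated to the surviving locator.
    """
    for attr, value in locators:
        for element in page:
            candidate = element.text if attr == "text" else element.attrs.get(attr)
            if candidate == value:
                return element, (attr, value)
    raise LookupError("no locator matched; a human needs to review this test")


# Assumed scenario: the button id changed from "submit" to "btn-submit".
page = [Element("button", {"id": "btn-submit", "data-test": "checkout"}, "Pay now")]
locators = [("id", "submit"), ("data-test", "checkout"), ("text", "Pay now")]

element, healed_by = find_with_healing(page, locators)
print(f"Found <{element.tag}> using fallback locator {healed_by}")
```

The stale `id` locator fails, the `data-test` locator succeeds, and the test keeps running instead of breaking on a cosmetic change.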

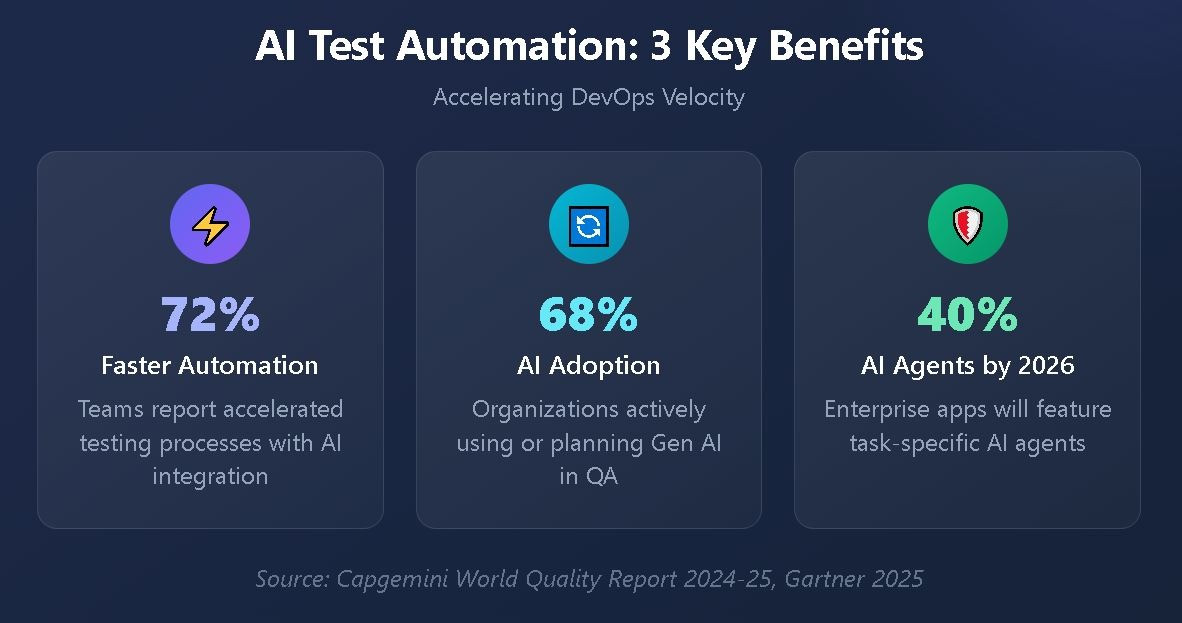

Beyond maintenance reduction, AI-powered platforms evaluate code changes and predict which test cases are most likely to catch defects. Teams run fewer tests while catching more bugs, a combination that seemed impossible under traditional approaches. Risk-based prioritization ensures critical paths receive attention first, accelerating feedback without sacrificing coverage.
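Risk-based prioritization can be sketched as a scoring function. The example below is a hand-weighted heuristic, not a trained model: the 0.6 and 0.4 weights, the 0.5 default for tests with no history, and the `prioritize` function are all assumptions chosen for illustration.

```python
def prioritize(tests, history, changed_areas):
    """Order tests so the riskiest run first.

    tests: list of dicts with "name" and "area" keys
    history: test name -> list of past results (True means passed)
    changed_areas: set of application areas touched by the commit
    """
    def risk(test):
        runs = history.get(test["name"], [])
        if runs:
            failure_rate = sum(1 for passed in runs if not passed) / len(runs)
        else:
            failure_rate = 0.5  # assumed default when there is no history
        touches_change = 1.0 if test["area"] in changed_areas else 0.0
        # Illustrative weights only; a real system would learn these.
        return 0.6 * touches_change + 0.4 * failure_rate

    return sorted(tests, key=risk, reverse=True)


tests = [
    {"name": "test_login", "area": "auth"},
    {"name": "test_pay", "area": "payments"},
    {"name": "test_search", "area": "search"},
]
history = {
    "test_login": [True, True, True, False],
    "test_pay": [True, True, True, True],
    "test_search": [True, False, False, True],
}

ordered = prioritize(tests, history, changed_areas={"payments"})
print([t["name"] for t in ordered])
```

Here the payment test runs first because the commit touched its area, followed by the test with the worst recent failure rate.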

Modern AI testing tools also understand context in ways that scripted automation cannot. They recognize when visual changes are intentional design updates versus actual defects, reducing false positives that erode developer trust in automated results.

How Does AI Accelerate the DevOps Software Pipeline?

DevOps success depends on fast feedback loops. When developers commit code, they need immediate signals about whether their changes introduce problems. Traditional testing creates bottlenecks because comprehensive test suites take hours to complete. Engineers either wait for results or proceed without confidence, neither option serving the goal of rapid, reliable delivery.

AI software pipeline integration makes testing smarter, not just faster. Machine learning models analyze historical test data, code change patterns, and defect distributions to determine exactly which tests need to run for any given commit. A change to the payment module doesn’t require re-running authentication tests. AI recognizes these relationships and optimizes execution accordingly.
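The payment-versus-authentication example can be sketched with a static mapping from source paths to test files. The paths, the `COVERAGE_MAP` table, and the `select_tests` function are hypothetical; an AI-driven tool would infer these relationships from historical data rather than read them from a hand-written table.

```python
from fnmatch import fnmatch

# Hypothetical mapping from source path patterns to the tests that cover them.
COVERAGE_MAP = {
    "src/payments/*": {"tests/test_payments.py", "tests/test_checkout.py"},
    "src/auth/*": {"tests/test_auth.py"},
}


def select_tests(changed_files, coverage_map=COVERAGE_MAP):
    """Return the tests to run for a commit, given the files it changed.

    If any changed file is not covered by the map, fall back to the
    full suite rather than risk skipping a relevant test.
    """
    all_tests = set().union(*coverage_map.values())
    selected = set()
    for path in changed_files:
        matched = [tests for pattern, tests in coverage_map.items()
                   if fnmatch(path, pattern)]
        if not matched:
            return sorted(all_tests)
        for tests in matched:
            selected |= tests
    return sorted(selected)


print(select_tests(["src/payments/refund.py"]))
```

A change under `src/payments/` selects only the payment and checkout tests, while an unmapped file triggers the whole suite as a conservative default.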

Continuous testing within DevOps workflows becomes genuinely continuous when AI handles the orchestration. Tests execute in parallel across cloud environments, results stream back in real time, and quality gates make automated decisions about deployment readiness. Human intervention shifts from routine monitoring to exception handling.
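An automated quality gate can be as simple as a function that turns a test run summary into a deployment decision. In this sketch the `RunSummary` fields, the 98% pass-rate threshold, and the three outcome labels are assumptions for illustration; real gates usually combine more signals.

```python
from dataclasses import dataclass


@dataclass
class RunSummary:
    passed: int
    failed: int
    critical_failed: int  # failures on tests tagged as critical-path


def gate_decision(summary, min_pass_rate=0.98):
    """Return "deploy", "hold", or "block" for a pipeline checkpoint."""
    total = summary.passed + summary.failed
    if total == 0:
        return "hold"  # no test evidence, so escalate to a human
    if summary.critical_failed > 0:
        return "block"  # any critical-path failure stops the release
    pass_rate = summary.passed / total
    return "deploy" if pass_rate >= min_pass_rate else "hold"


print(gate_decision(RunSummary(passed=99, failed=1, critical_failed=0)))
```

Only the "hold" outcome needs a person, which is the shift from routine monitoring to exception handling described above.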

Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by 2026. Testing is one of the clearest use cases for this agentic approach. AI agents that autonomously generate tests, execute them, analyze results, and propose fixes change the velocity equation.

Traditional Testing vs AI-Powered Testing

Understanding the practical differences helps teams evaluate where AI delivers the most value. The comparison below highlights key capability shifts:

| Capability | Traditional Automation | AI-Powered Automation |
| --- | --- | --- |
| Test Maintenance | Manual updates required for UI changes | Self-healing adapts to changes automatically |
| Test Selection | Run full regression suite | Intelligent selection based on risk and change analysis |
| Defect Detection | Pattern-matching against expected results | Anomaly detection identifies unexpected behaviors |
| Execution Speed | Sequential or limited parallelism | Dynamic cloud scaling with optimized distribution |
| Feedback Timing | Hours for comprehensive results | Minutes with prioritized critical-path testing |
| Coverage Analysis | Code coverage metrics | Behavioral coverage with usage pattern integration |

Five Ways AI Test Automation Accelerates DevOps Velocity

The benefits of AI-powered testing extend across the entire delivery pipeline. Here’s how organizations are achieving measurable QA automation acceleration:

- Eliminating test maintenance overhead: Self-healing tests adapt to application changes without manual intervention, freeing engineers to focus on feature development instead of script repairs.
- Reducing test execution time: Intelligent test selection runs only the tests that matter for each code change, compressing hours-long regression suites into minutes-long targeted validations.
- Catching defects earlier: AI models trained on historical patterns predict where bugs are most likely to appear, enabling shift-left strategies that find issues before they compound.
- Improving test coverage accuracy: Machine learning identifies gaps in test coverage by analyzing actual user behavior patterns, not just code paths.
- Enabling continuous quality gates: Automated decision-making at pipeline checkpoints ensures only quality code progresses, eliminating the manual approval bottlenecks that slow releases.

What Role Do DevOps Testing Tools Play in QA Automation Acceleration?

DevOps testing tools have evolved as teams demand tighter integration between quality assurance and delivery pipelines. Modern platforms don’t treat testing as a separate phase but embed it throughout development workflows.

Test management platforms that integrate natively with GitHub and Jira eliminate context switching that fragments team productivity. When defects link directly to code commits and user stories, traceability happens automatically rather than requiring manual documentation.

CI/CD integration capabilities have become table stakes. Jenkins, CircleCI, GitHub Actions, and similar platforms expect testing tools to run automatically when triggered, report results in standardized formats, and provide pass/fail signals for deployment gates. The most effective solutions upload results directly into unified dashboards where both developers and QA engineers see the same quality picture.
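As one example of a standardized format, many test frameworks can emit JUnit-style XML reports. The sketch below assumes that format and uses only Python's standard library; the `summarize_junit` and `build_sample_report` helpers and the sample test names are hypothetical. It counts outcomes per test case and derives the pass/fail signal a deployment gate would consume.

```python
import xml.etree.ElementTree as ET


def summarize_junit(xml_text):
    """Count test outcomes in a JUnit-style XML report."""
    root = ET.fromstring(xml_text)
    totals = {"tests": 0, "failures": 0, "errors": 0, "skipped": 0}
    for case in root.iter("testcase"):
        totals["tests"] += 1
        if case.find("failure") is not None:
            totals["failures"] += 1
        elif case.find("error") is not None:
            totals["errors"] += 1
        elif case.find("skipped") is not None:
            totals["skipped"] += 1
    return totals


def build_sample_report():
    """Build a small report in memory (sample data for illustration only)."""
    suites = ET.Element("testsuites")
    suite = ET.SubElement(suites, "testsuite", name="checkout")
    ET.SubElement(suite, "testcase", name="test_pay")
    failing = ET.SubElement(suite, "testcase", name="test_refund")
    ET.SubElement(failing, "failure", message="expected 200, got 500")
    skipped = ET.SubElement(suite, "testcase", name="test_legacy")
    ET.SubElement(skipped, "skipped")
    return ET.tostring(suites, encoding="unicode")


summary = summarize_junit(build_sample_report())
# A nonzero exit code is the conventional "fail" signal for a CI step.
exit_code = 1 if summary["failures"] or summary["errors"] else 0
print(summary, "exit code:", exit_code)
```

Counting `testcase` elements directly, rather than trusting the summary attributes on each suite, avoids double counting when reports nest suites.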

TestQuality approaches this integration challenge as a foundational design principle rather than an afterthought. Built specifically for GitHub and Jira workflows, it connects test management with defect tracking, CI/CD pipelines, and automation frameworks in a seamless experience. Teams using Selenium, Playwright, Cucumber, or any of the dozens of supported frameworks can automatically import results and maintain complete visibility across manual and automated testing efforts.

How Do Teams Balance Human Expertise with AI Testing Capabilities?

The rise of AI in testing amplifies what skilled testers can accomplish. AI excels at repetitive tasks, pattern recognition, and processing vast amounts of data. Humans excel at creative thinking, understanding user intent, and making nuanced decisions about quality tradeoffs.

Effective organizations design workflows where AI handles the heavy lifting while humans focus on high-value activities. Exploratory testing, usability evaluation, and edge case identification remain fundamentally human endeavors. AI test automation augments these efforts by ensuring comprehensive baseline coverage, freeing testers to investigate areas that require intuition and creativity.

The collaboration extends to test creation itself. AI-powered test generation tools can convert user stories and requirements into comprehensive test cases in seconds, but experienced testers review and refine these suggestions. TestQuality’s integration with TestStory.ai demonstrates this approach, using AI to accelerate test case creation while keeping humans in the loop for quality assurance.

Over 51% of development teams have now adopted DevOps practices. This rapid adoption creates demand for testing approaches that scale with increased deployment frequency. Teams that combine AI capabilities with human expertise achieve the velocity their organizations demand without sacrificing the quality their customers expect.

FAQ

How does AI test automation improve CI/CD pipeline efficiency? AI test automation improves CI/CD efficiency by intelligently selecting which tests to run based on code changes, reducing execution time while maintaining coverage. Self-healing capabilities eliminate test failures caused by UI changes, and predictive analytics identify high-risk areas requiring immediate attention.

What skills do QA engineers need to work with AI testing tools? QA engineers benefit from understanding machine learning concepts, data analysis fundamentals, and how to configure AI testing platforms. However, most modern AI testing tools allow teams to leverage capabilities without deep technical expertise.

Can AI testing completely replace manual testing? AI testing complements rather than replaces manual testing. While AI excels at regression testing, pattern detection, and repetitive validation, human testers remain essential for exploratory testing, usability evaluation, and scenarios requiring creative thinking or contextual judgment.

How do teams measure the ROI of AI test automation investments? Teams measure AI testing ROI through metrics like test maintenance time reduction, defect escape rate improvements, deployment frequency increases, and mean time to detect issues. Organizations typically see measurable improvements within the first few months of adoption.
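Two of the ROI metrics above reduce to simple arithmetic. The following sketch shows one common way to compute them; the function names and the input numbers are made up for illustration and are not results from any study.

```python
def defect_escape_rate(found_in_production, found_before_release):
    """Share of all known defects that reached production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


def percent_change(before, after):
    """Relative change from a baseline, as a percentage (negative means reduction)."""
    if before == 0:
        raise ValueError("baseline must be nonzero")
    return (after - before) / before * 100


# Illustrative inputs only: 5 escaped defects out of 50 total,
# and weekly maintenance time falling from 40 to 30 hours.
escape_rate = defect_escape_rate(found_in_production=5, found_before_release=45)
maintenance_change = percent_change(before=40, after=30)
print(f"escape rate: {escape_rate:.0%}, maintenance hours: {maintenance_change:+.0f}%")
```

Tracking the same calculations before and after adoption gives a like-for-like baseline for the comparison.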

Transform Your Testing Velocity with Intelligent QA

The shift toward AI test automation is a strategic repositioning of quality assurance within the software delivery lifecycle. Teams that embrace these capabilities gain deployment velocity, reduce operational overhead, and catch defects before they reach production.

With native GitHub and Jira integration, comprehensive support for test automation frameworks, and AI-driven test case generation through TestStory.ai, TestQuality provides the AI-powered QA platform that modern development teams need.

Contact Information:

Test Quality

8921 Northlake Hills Dr

Jonestown, TX

United States

Test Quality Support

https://testquality.com